From “Is AI Unethical?” to a More Dangerous Question - “The Line Between Assistance and Surrender”

From guiding AI governance to exploring human awakening — finding integrity in how we build, use, and relate to technology.

A few days ago, I found myself in a conversation that started with a simple question:

“Is using AI unethical?” (the original article is below)

It came from a Facebook discussion - the kind that quickly fills with strong opinions, fear, curiosity, and contradiction.

I didn’t answer straight away. Instead, I sat with it. I work in AI platforms and governance, so I think about this a lot, but I hate reacting. And then, a few days later, I stepped into a three-day pro-code Generative AI workshop.

Not theory. Not headlines. Hands-on. Building. Testing. Seeing what’s now possible.

And that changed the question entirely.

“Is using AI ethical?” is not the first question…

An article for Users, Developers, and conscious souls

Because when you’re inside it — when you see how quickly agents can be built, how accessible these tools have become, how easily powerful models can be orchestrated — ethics stops being abstract.

It becomes immediate. Practical. Unavoidable.

The wrong first question

So no, I don’t think the first question is: “Is AI unethical?”

I think the better question is:

What are we using AI for, what type of AI are we using, and who - or what - carries the cost?

Sovereignty & Agency first: Can we remember that we, the user and builder, have sovereignty to make and use it ethically?

Because AI ethics is not one thing.

It’s not a philosophical checkbox. It’s a system of considerations:

security: e.g. red teaming

agent identity

privacy & data sensitivity

copyright

bias

sustainability

Groundedness of answers to avoid hallucination

human accountability

governance guardrails

Explainability and traceability

And now, with LLMs and agents becoming easier for anyone to build, that system is no longer abstract or centralised.

The part we don’t talk about enough

AI can be extraordinary. It can democratise capability.

It can help people organise ideas in minutes, reduce admin, build tools, code, analyse, and make sense of complexity. I won’t share the stats we see at work, but this matters to me.

As someone who’s dyslexic and too creatively chaotic, AI has levelled the playing field. Tools like Grammarly and AI have helped me organise my thinking and express ideas more clearly, without the quiet shame that can sometimes sit behind writing.

That’s the part of this story we shouldn’t lose.

AI is democratising capability, including the ability to create agents that solve our own problems. In the last month, I created two agents and won an AI hackathon (the latter with a team), and I am not a developer.

But there’s another side, and it became very obvious to me during the workshop.

We are starting to treat AI as if every problem requires the most powerful model available.

As if bigger = better.

As if faster = justified.

The sledgehammer problem

But not all AI is the same.

The use case should drive the solution!

Not all large language models (LLMs) are the same.

Not all requests are equal.

Many people still don’t know the difference between generative AI and machine learning.

A simple classification task, a search query, a workflow automation, a small language model (SLM), a traditional Machine Learning model - or even no AI at all - may be the better answer.

One thing was clear at the workshop, which was full of people using AI, building with it, and trying to make their business units successful by optimising it: we have forgotten what each kind of AI is good for.

LLMs are strong at language, synthesis, reasoning, support and pattern exploration. Small language models (SLMs) exist too, and can be enough for narrower language tasks. But for maths-heavy prediction, classification or optimisation, traditional machine learning may still be the better tool.

For developers, the question will always be: no-code, mid-code, or pro-code? And then: do you need a model at all, and if so, what kind? There are 11,000+ to choose from.

Key point:

The use case should drive the AI, not the other way around. (Sorry, I can’t resist a Harry Potter analogy: “The wand chooses the wizard, Harry.” Don’t jump to the flashy wand.)

Using a powerful LLM for everything is like using a sledgehammer to tap in a nail. I mean, would you employ a ‘CEO’ to do all the jobs in a business?
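To make the “right tool for the job” idea concrete, here is a minimal sketch in Python. The task categories, the `choose_tool` helper and the mapping itself are my own illustrative assumptions based on the examples above, not a standard or an official taxonomy. Real decisions also depend on data, risk and cost.

```python
from enum import Enum


class Task(Enum):
    """Hypothetical task categories, for illustration only."""
    FIXED_RULE_WORKFLOW = "fixed_rule_workflow"
    KEYWORD_SEARCH = "keyword_search"
    NUMERIC_PREDICTION = "numeric_prediction"
    SIMPLE_CLASSIFICATION = "simple_classification"
    NARROW_LANGUAGE_TASK = "narrow_language_task"
    OPEN_ENDED_SYNTHESIS = "open_ended_synthesis"


# Ordered roughly from lightest to heaviest tool.
# This mapping is an assumption for the sketch, not a rule.
TOOL_FOR_TASK = {
    Task.FIXED_RULE_WORKFLOW: "no AI: workflow automation",
    Task.KEYWORD_SEARCH: "no AI: search index",
    Task.NUMERIC_PREDICTION: "traditional machine learning",
    Task.SIMPLE_CLASSIFICATION: "traditional machine learning",
    Task.NARROW_LANGUAGE_TASK: "small language model (SLM)",
    Task.OPEN_ENDED_SYNTHESIS: "large language model (LLM)",
}


def choose_tool(task: Task) -> str:
    """Return the lightest tool class that plausibly fits the task."""
    return TOOL_FOR_TASK[task]


# Print the suggested tool for every category.
for task in Task:
    print(f"{task.value:24} -> {choose_tool(task)}")
```

The point of the sketch is the order of the questions: describe the task first, then pick the lightest tool that fits, and only reach for the LLM when the task is genuinely open-ended.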

The real cost?

And every time we do that, there is a cost. Not just financial.

The cost considerations:

Compute.

Energy.

Water.

Attention.

Infrastructure.

Trust.

We often talk about AI’s environmental impact in isolation, but context matters.

Data centres are a growing part of global energy demand, but they sit alongside much larger systems. The IEA projects data centre electricity consumption could reach just under 3% of global electricity demand by 2030, while buildings, transport and industry each account for much larger shares of global final energy use. Agriculture accounts for around 70% of global freshwater withdrawals.

So although data centres are not the primary global energy problem, they are a growing and visible one, and where they are located matters: they should not pull on scarce water resources in that region.

So the question is not: “AI uses resources, therefore AI is bad.”

The question is:

Is the compute replacing inefficiency, or amplifying unnecessary demand?

Where ethics actually live:

This is where AI ethics becomes real.

Not in theory. In decision-making. In design. In restraint.

AI should not become a novelty layer over everything. That is where governance, or Responsible AI (RAI), needs to happen.

It should not be used because it is shiny, fast, or impressive. It should be used where it genuinely improves access, quality, holistic prediction and patterns, safety, decision-making, or time, so that you, the human, can be more creative with your solutions and prompts.

And yes — there are areas that deserve deeper scrutiny.

For me, one of those is AI image generation.

Questions around copyright, artist consent, likeness, style replication, misinformation, and the normalisation of synthetic content without clear labelling are not minor concerns.

They are foundational ones. Do we really know what the truth is these days?

(That is a whole different article)

[Funny, not funny side note:

I understand why Taylor Swift has been so vocal about image, voice, and likeness, and about stopping AI fakes. Someone has already tried to scam my parents by pretending to be me.]

The EU AI Act is moving in the right direction, with staged obligations around prohibited practices, AI literacy, GPAI models and high-risk systems, but legislation alone will not solve this; I worry that practical adoption and organisational readiness are not moving fast enough.

Organisations still need practical guardrails, clear ownership, model selection standards, grounding, evaluation, monitoring, human accountability and education. And all of this needs to move faster than I think it is… but we’re getting there.

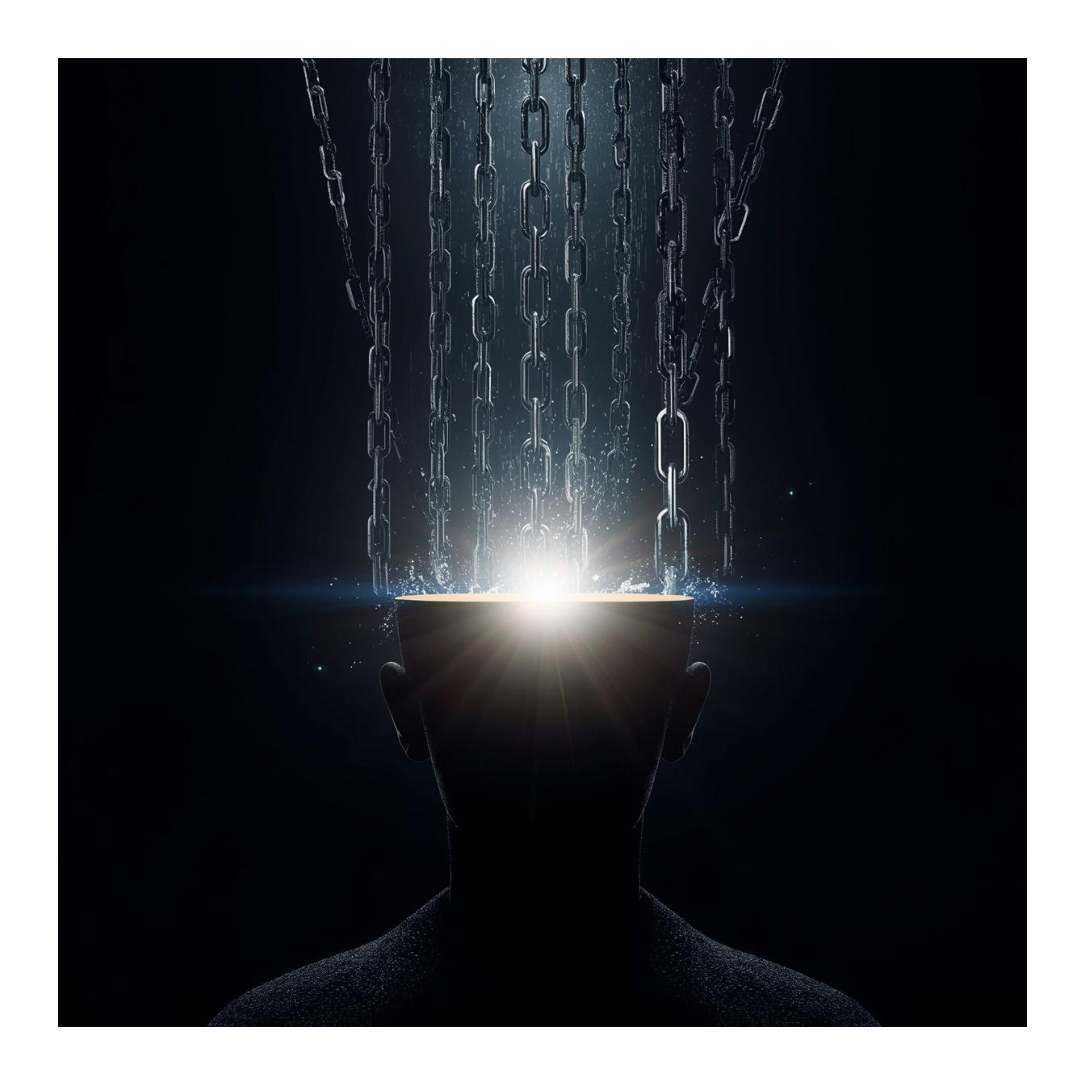

The part we don’t name enough: Agency & Authority

There’s something else I’ve been sitting with — and it only really clicked for me after being inside that workshop.

AI can start to feel like an authority.

Not because it’s conscious or all-knowing, but because it is fast, coherent, and often sounds certain. And that’s where it becomes risky.

Not because of what it is, but because of how easily we can start to relate to it, believe it, and become complacent.

Psychologists often talk about social agency - this is powerful and can be manipulated against us.

What is it?

As humans, we give social agency to inanimate objects, such as images or technology that communicates with us (e.g., Alexa). We start to attribute human emotions to them, and the more we interact with them, the more we trust them, feel loyal to them, and become complacent. (We can also be manipulated in other ways, but let me stick to one variable here: complacency.)

I’ve become increasingly vocal at work about challenging AI usage as a success metric when it isn’t balanced with discernment, governance and understanding of how the AI works.

We risk giving agency away if we don't check and ask whether the answers AI gives are right.

I recently spent two hours trying to build a business case on ‘facts’ the AI offered, only to abandon it all when I realised how spurious they were: the AI was drawing on archived and draft data that had been archived for a reason.

Strings attached?

Be discerning and don’t be pulled into non-thinking.

Treat the agent like an apprentice or assistant.

Agents can get the answer wrong or half wrong, especially if they are configured to be ‘creative’ (LLMs have a creativity setting) and tuned to be helpful. Sources can be dubious and the tone overconfident, which is where guardrails and weightings matter for grounding the answers. As a user, all you can do is get curious about the answer you received, ask more creative questions, and, like a good student, double-check facts and references.
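For the curious, here is a small sketch of what a creativity setting does, assuming it refers to what most LLM APIs call sampling temperature. The scores below are made up for three candidate next words, and the function name is my own. A low temperature concentrates probability on the top candidate, while a high temperature spreads it out, which allows more surprising (and more error-prone) choices.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw model scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next words.
logits = [2.0, 1.0, 0.1]

# Compare a cautious, a default and a 'creative' setting.
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Running it shows the top candidate dominating at the low setting and losing ground at the high one. That is the trade-off behind ‘creative’ answers: more variety, less predictability.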

Developers:

If you’re building agents, how will you ground your content? Retrieval-Augmented Generation (RAG) needs work, and your evaluation metrics need some thought. This is not trivial. Governance needs to be designed in from the start of your journey, not added as an afterthought. This is governance by design.
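As a starting point, here is a deliberately simple sketch of grounding and evaluation in Python. Everything in it is an assumption for illustration: the `Doc` record, the status labels, the word-overlap retrieval (a crude stand-in for embeddings and a vector store) and the `groundedness` score. A real pipeline would use proper retrieval and a tested evaluation framework, but the two design questions are the same: which sources is the agent allowed to read, and how much of its answer do those sources actually support?

```python
import re
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    text: str
    status: str  # "approved", "draft" or "archived" (hypothetical labels)


def tokens(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[Doc], k: int = 2) -> list[Doc]:
    """Rank approved docs by word overlap with the query; skip drafts and archives."""
    approved = [d for d in docs if d.status == "approved"]
    scored = [(len(tokens(query) & tokens(d.text)), d) for d in approved]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [d for score, d in ranked[:k] if score > 0]


def groundedness(answer: str, sources: list[Doc], threshold: float = 0.5) -> float:
    """Share of answer sentences whose words are mostly found in the sources."""
    source_words = set().union(*(tokens(d.text) for d in sources))
    sentences = [s for s in re.split(r"[.!?]+", answer) if s.strip()]
    if not sentences:
        return 0.0
    supported = 0
    for sentence in sentences:
        words = tokens(sentence)
        if words and len(words & source_words) / len(words) >= threshold:
            supported += 1
    return supported / len(sentences)


# Made-up documents: one current, one archived for a reason.
docs = [
    Doc("Pricing 2024", "The standard plan costs 40 pounds per month", "approved"),
    Doc("Old pricing", "The standard plan costs 25 pounds per month", "archived"),
]
sources = retrieve("How much does the standard plan cost per month?", docs)
answer = "The standard plan costs 40 pounds per month. It includes free flights to Mars."
print([d.title for d in sources], groundedness(answer, sources))
```

In this toy run, the archived document never reaches the agent, and the invented second sentence drags the score down to half. Filtering by status is the governance-by-design part; the score is the beginning of an evaluation metric, not the end of one.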

Users:

For me, AI sits in a similar space to any librarian or expert.

It can offer perspective.

It can accelerate learning.

It can expand how we see things.

But it should never replace our agency and discernment. Because the moment we stop questioning it - the moment we defer to it - we start to lose something essential.

Our judgement.

Our creativity.

Our sovereignty.

Where my two worlds meet

This is where my two worlds start to intersect.

On one side: innovation and AI governance, which create leaps forward for humanity and solve business problems, with guardrails, accountability and responsibility.

On the other side: inner work and awakening. Awareness, creativity and empowered sovereignty, with compassionate curiosity and an open mind, to create the life, joy and legacy you’re here to create.

But both should be done ethically. Not for one, but for all.

And the truth is, no external framework can fully protect us if we’re not paying attention internally. This applies to AI, as well as any system or person in our lives.

We can build all the right controls - model selection, evaluation, monitoring, and regulation. But if we stop thinking, stop questioning, stop engaging critically, we bypass the very thing those systems are designed to protect.

AI can enhance creativity, but it should never replace it.

It can support thinking, but it should never do the thinking for us.

That line between assistance and surrender is where the real ethics live.

A simple personal rule

So my approach has become simpler:

Learn from it.

Challenge it.

Use it to expand your thinking.

But don’t give your agency away to it. Because the risk isn’t just that AI gets things wrong.

It’s that we start to believe it’s right without doing the work of thinking for ourselves.

A final note to creators, builders, and thinkers

I understand the fear from writers and creatives. But I don’t think the answer is to abandon AI. I think the answer is to use it without giving up your sovereignty.

Use it like an assistant, not an oracle.

Use it like a detective partner.

Ask better questions.

Check sources.

Challenge the output.

Notice what it misses.

Stop at its 3rd prompt - don’t be time-hijacked as it leads you down a rabbit hole.

Bring your own judgement, taste and lived experience, and apply your own integrity and ethics, so your intuition stays alive and is not misdirected by AI’s personalised answers, which make you feel special and, therefore, right.

So, is AI unethical?

Not inherently. But careless AI is.

Unaccountable AI is. Wasteful AI is.

Using a powerful tool without intention is not innovation. It’s poor design.

Replacing human creativity instead of supporting it is not progress. It’s a failure of imagination.

The future of AI ethics is not just “can we use it?”

It is:

Should we use it?

Which tool is right?

What is the cost?

Who benefits?

Who is harmed?

And where must the human stay firmly in the loop?

So to conclude:

AI isn’t inherently unethical. Careless AI is. The real ethics live in agency, discernment, right-tool-for-the-job decisions, and refusing to surrender our human judgment.

Sorry, this was a long one. I hope you found it helpful. Let me know your thoughts.

With Moonbeams

Nila

The Original Article on Ethics